This is the first guide in the AI Visibility for Shopify guidebook - a four-part series covering how AI systems find, understand, and recommend products from Shopify stores.

If you sell on Shopify, your products are competing for visibility in a new kind of search. ChatGPT, Perplexity, Google AI Overviews, and Gemini are answering product questions directly - with specific recommendations, prices, and links. The stores that show up in those answers are getting traffic. The ones that don’t are invisible.

This guide breaks down exactly how each of these tools works when a shopper asks a product question.

Five things you’ll learn in this guide:

- What happens behind the scenes when someone asks ChatGPT to recommend a product

- How Perplexity retrieves and presents product information differently from ChatGPT

- Where Google AI Overviews pull their product recommendations from - and why 83% of cited pages aren’t in the traditional top 10

- How Gemini, Bing Copilot, and voice assistants fit into the picture

- The one pattern all AI shopping tools share - and why it determines whether your store gets recommended

A Product Query in ChatGPT

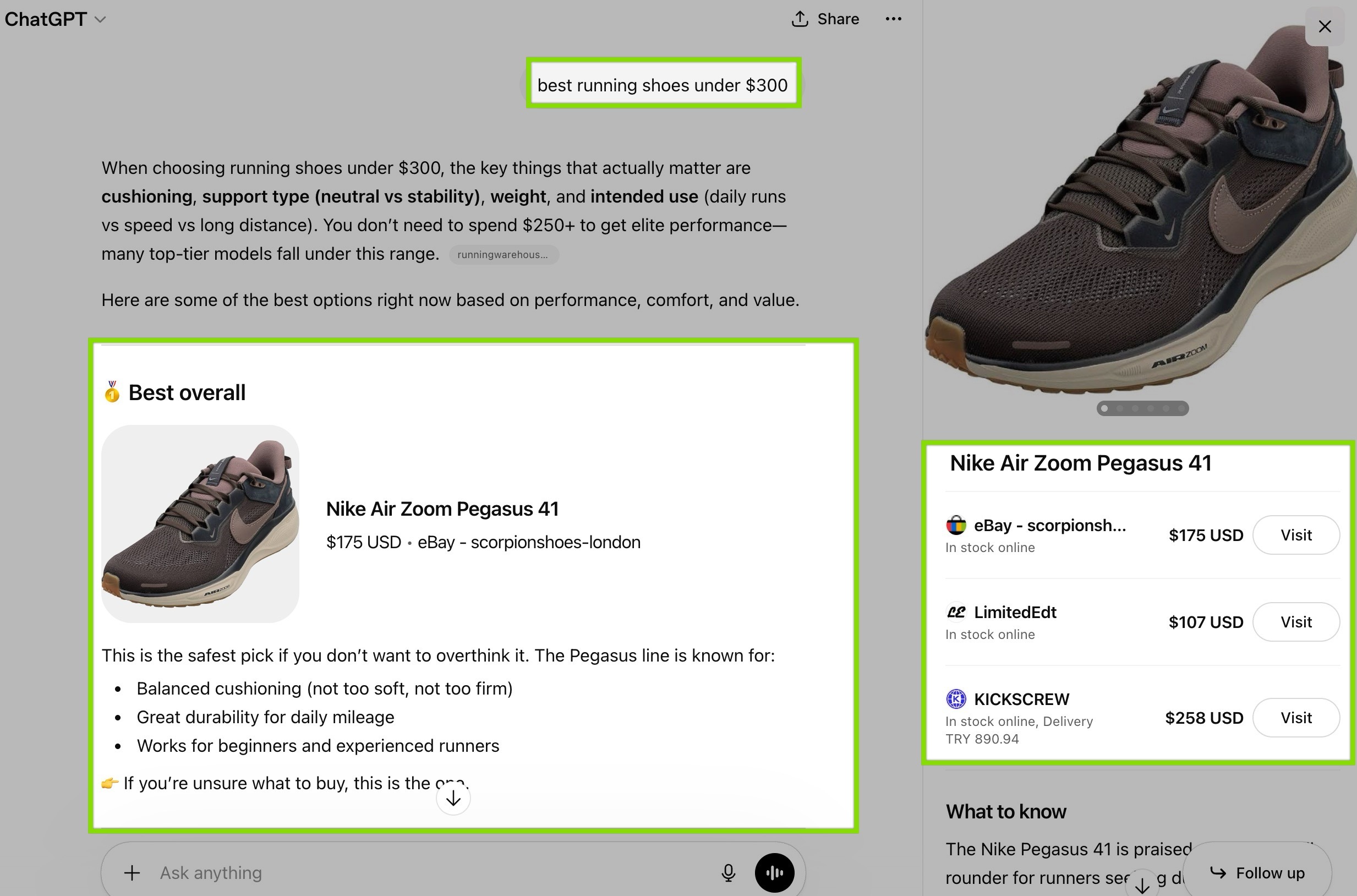

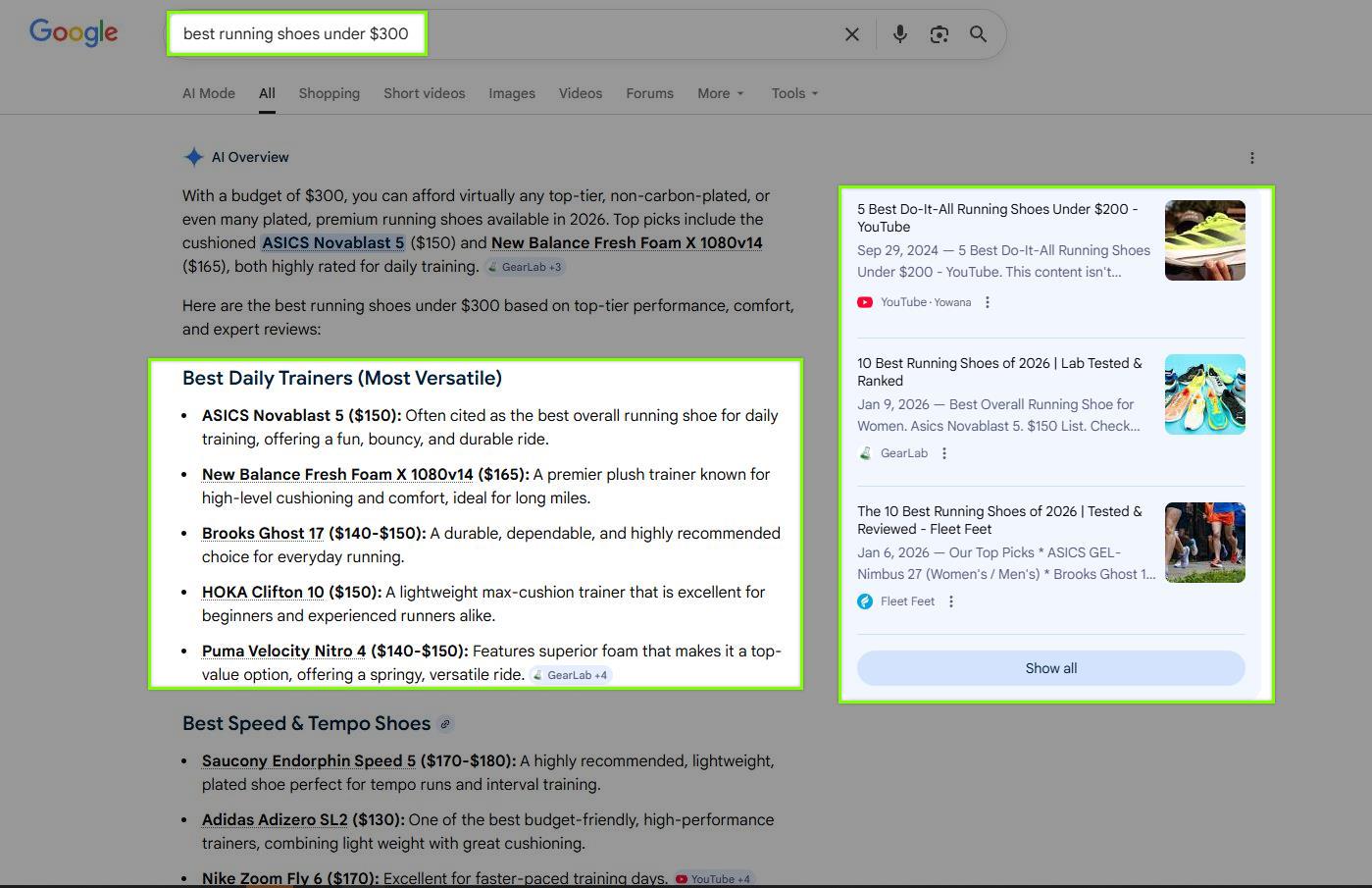

Someone types into ChatGPT: “best running shoes under $300.”

What happens next is invisible to the shopper but critical for your store.

ChatGPT doesn’t answer from memory alone. For product queries, it triggers a live web search using a crawler called OAI-SearchBot. This crawler visits pages across the web, pulls content from them, and passes that content back to ChatGPT’s language model. The model then reads through the retrieved content, identifies relevant products, compares them, and generates a recommendation.

The response the shopper sees typically includes:

- Specific product names with brief descriptions

- Prices and key specs

- Reasoning for why each product was recommended

- Source links the shopper can click to visit the original pages

Here’s where it gets relevant for your store.

ChatGPT’s crawler doesn’t render JavaScript. Research by Vercel and MERJ tracked over half a billion fetches by GPTBot, OpenAI’s training crawler, and found zero evidence of JavaScript execution. That means if your product data - your schema markup, your FAQ content, your structured product attributes - only appears after JavaScript runs on the page, ChatGPT’s crawler never sees it.

It only reads what’s in the raw HTML source of your page. If your structured data is server-side rendered, it’s visible. If it’s injected by a JavaScript-based app after the page loads, it’s not.
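A quick way to see the difference is to parse only the raw HTML, the way a non-rendering crawler would. The sketch below uses Python’s standard-library HTML parser. The two sample pages and the function name `jsonld_in_raw_html` are made up for illustration, and this is not how OpenAI’s crawlers are actually implemented.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is skipped


def jsonld_in_raw_html(raw_html):
    """Return the JSON-LD objects present in the HTML source, without running any JavaScript."""
    parser = JsonLdExtractor()
    parser.feed(raw_html)
    return parser.blocks


# Hypothetical page where the markup is already in the HTML source.
SERVER_RENDERED = """
<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Product", "name": "Trail Runner"}</script>
</head><body><h1>Trail Runner</h1></body></html>
"""

# Hypothetical page where an app injects the same markup only after JavaScript runs.
JS_INJECTED = """
<html><head>
<script>
  const s = document.createElement("script");
  s.type = "application/ld+json";
  s.textContent = JSON.stringify({"@type": "Product", "name": "Trail Runner"});
  document.head.appendChild(s);
</script>
</head><body><h1>Trail Runner</h1></body></html>
"""

server_blocks = jsonld_in_raw_html(SERVER_RENDERED)  # one Product object
injected_blocks = jsonld_in_raw_html(JS_INJECTED)    # empty list
```

The second page would show the same structured data in a browser, but a parser that never executes the script finds nothing to extract.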

This single technical detail determines whether ChatGPT can access your product information at all.

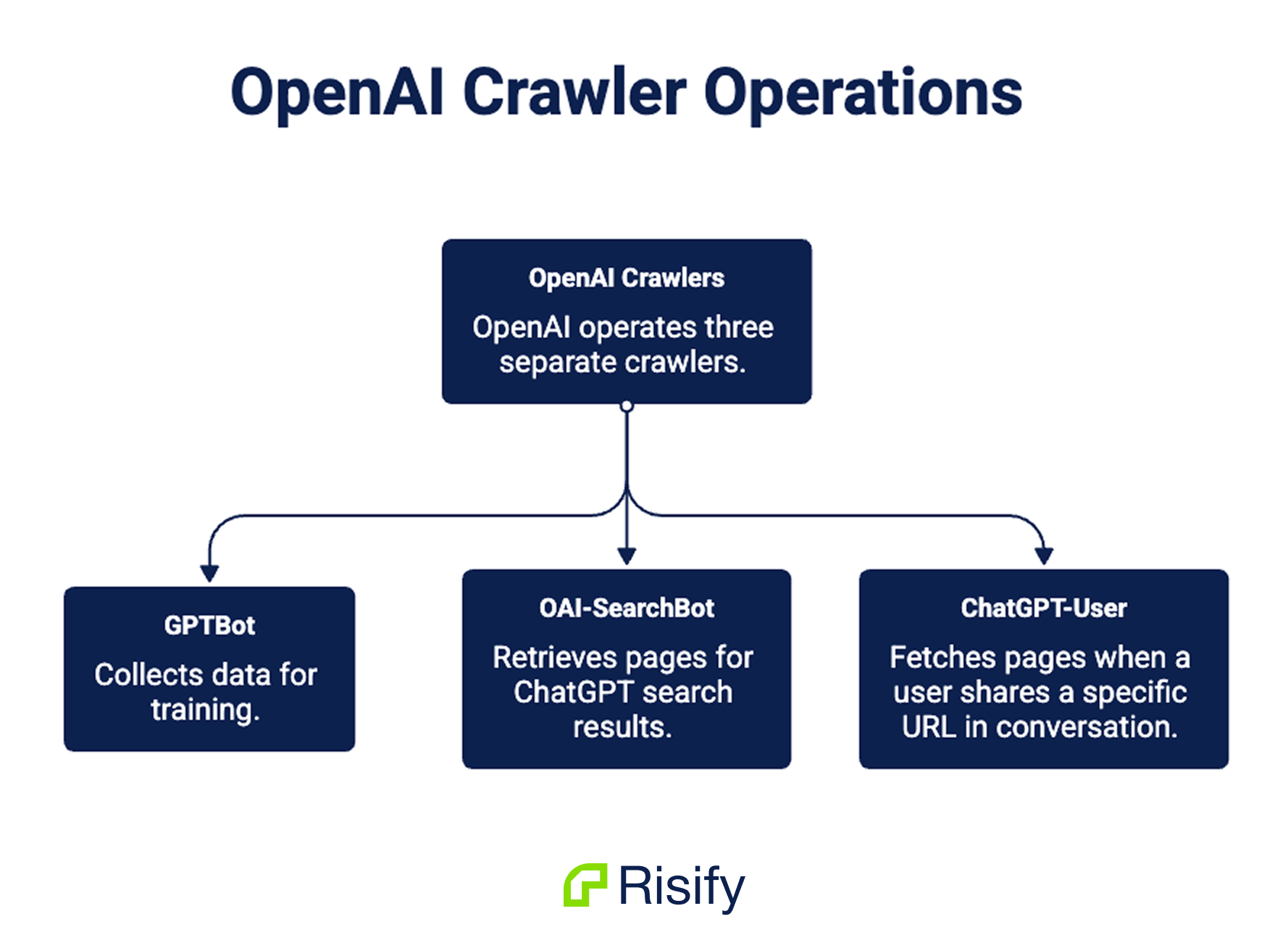

OpenAI operates three separate crawlers, each with a different job:

- GPTBot - collects data for training

- OAI-SearchBot - retrieves pages for ChatGPT search results

- ChatGPT-User - fetches pages when a user shares a specific URL in conversation

All three share the same limitation: no JavaScript execution.
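Because the three crawlers announce themselves under different names, a robots.txt file can treat them differently. The sketch below uses Python’s standard `urllib.robotparser` to check a hypothetical robots.txt that blocks the training crawler but allows the two retrieval crawlers. The rules and the example.com URL are assumptions for illustration, and how each crawler honors robots.txt is worth confirming against OpenAI’s current documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block training, allow search and user-triggered fetches.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /
"""


def crawler_access(robots_txt, url, agents=("GPTBot", "OAI-SearchBot", "ChatGPT-User")):
    """Return a dict mapping each crawler name to whether the rules let it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, "https://example.com/products/trail-runner")
```

With these rules, `access` reports GPTBot as blocked and the other two as allowed for the product URL.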

The Same Query in Perplexity

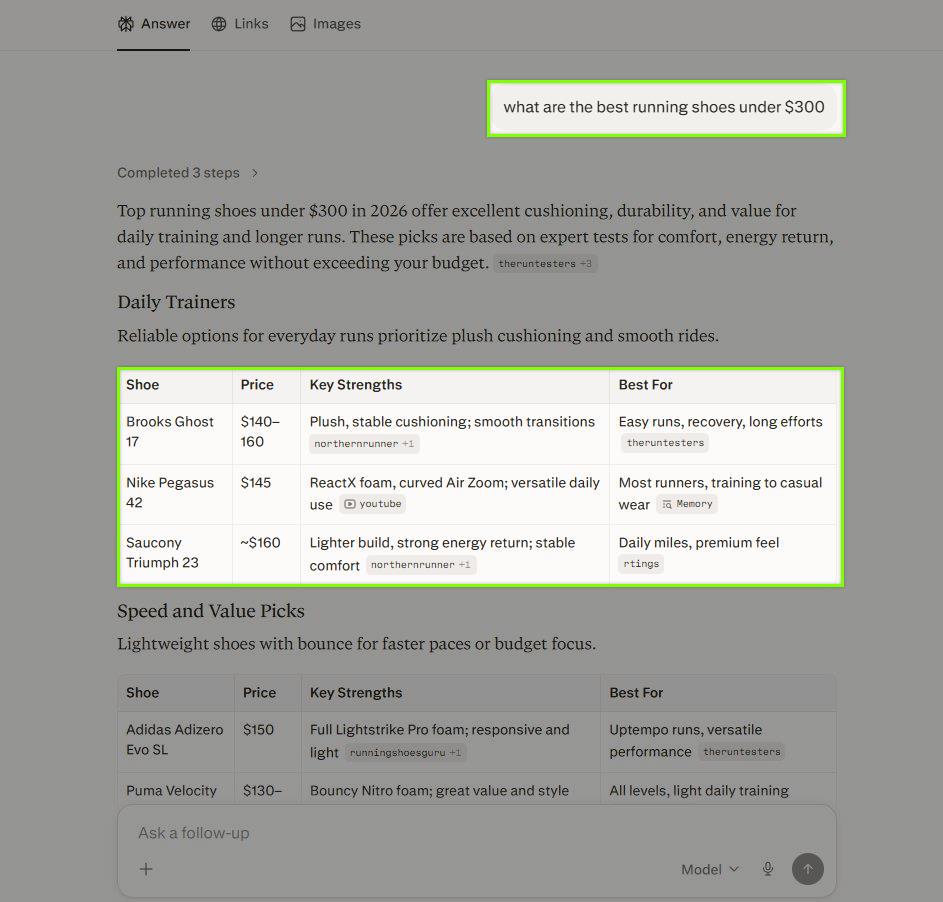

Take the same query - “best running shoes under $300” - and put it into Perplexity.

The output looks different from ChatGPT, and the retrieval works differently too.

Perplexity answers with inline citations numbered throughout the text. Each number links to a specific source page. Below the answer, it lists all sources with titles and URLs. It also suggests follow-up questions the shopper might want to ask next.

Where ChatGPT generates a flowing paragraph with links at the end, Perplexity is more explicit about where each claim comes from. Every product name, every price, every feature claim is tied to a numbered source.

Perplexity is also more aggressive about crawling.

Its declared crawler, PerplexityBot, sends an estimated 20-25 million requests per day. But a Cloudflare investigation from August 2025 revealed that Perplexity also operates an undeclared crawler that impersonates Google Chrome on macOS. This stealth crawler sends an additional 3-6 million daily requests, specifically targeting sites that have blocked the official PerplexityBot.

What does this mean for your store?

Perplexity is almost certainly crawling your pages - whether you’ve given it permission or not. The question is whether it finds structured, useful product data when it gets there, or unstructured content it can’t reliably parse.
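To see which declared crawlers are reaching your store, you can count requests by user-agent string in your server logs. The sketch below is a minimal version that matches on the crawler names used in this guide. The sample user-agent strings are simplified illustrations rather than the exact strings these bots send, and the function name is hypothetical.

```python
from collections import Counter

# Names of the declared crawlers mentioned in this guide.
DECLARED_AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot")


def count_declared_crawler_hits(user_agents):
    """Count requests whose user-agent string contains a declared crawler name."""
    counts = Counter()
    for ua in user_agents:
        lowered = ua.lower()
        for token in DECLARED_AI_CRAWLERS:
            if token.lower() in lowered:
                counts[token] += 1
                break
    return counts


# Simplified, illustrative user-agent strings (not copied from real traffic).
SAMPLE_USER_AGENTS = [
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
]

hits = count_declared_crawler_hits(SAMPLE_USER_AGENTS)
```

The last sample line shows the limit of this approach: a request that presents itself as Chrome on macOS cannot be told apart from a real shopper by user agent alone, so an undeclared crawler would not appear in these counts.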

Perplexity doesn’t publish any documentation on how it uses structured data from websites. But the pattern holds: if your product pages contain clean, structured information in the HTML source, Perplexity can extract it accurately. If that information only exists in a visual layout with no underlying structure, the extraction becomes guesswork.

Google AI Overviews

Google AI Overviews appear at the top of search results for certain queries - above the traditional blue links. When someone searches “best espresso machine under $300,” they may see a generated summary with product recommendations, comparisons, and source citations before they ever reach a single organic result.

This changes the competitive landscape for every Shopify store.

Even if your product page ranks on page one for a keyword, an AI Overview can push it below the fold. And if your page is cited in the AI Overview, it can get clicks from a position that didn’t exist before AI Overviews appeared.

Where does Google pull AI Overview content from?

Google uses a combination of its Knowledge Graph (which contains over 500 billion facts and is heavily populated by structured data from websites), its regular search index, and a retrieval-augmented generation (RAG) process.

Google’s John Mueller confirmed this pipeline at Google Search Central Live Madrid in 2025: the user enters a question, Google Search finds relevant information, that information “grounds” the language model, and the model generates an answer with supporting links.
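The “grounding” step can be pictured as building a prompt from retrieved snippets before the model writes anything. The sketch below is a generic illustration of that idea, not Google’s implementation. The function name, the prompt wording, and the sample sources are all assumptions.

```python
def build_grounded_prompt(question, retrieved):
    """Combine a question with numbered (url, snippet) sources found by a search step."""
    lines = ["Answer the question using only the numbered sources below.", ""]
    for i, (url, snippet) in enumerate(retrieved, start=1):
        lines.append(f"[{i}] {url}: {snippet}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


# Hypothetical search results for the query.
SOURCES = [
    ("https://example.com/products/espresso-one", "Espresso One, $249, 15-bar pump, in stock."),
    ("https://example.com/blog/espresso-guide", "Entry-level machines under $300 compared."),
]

prompt = build_grounded_prompt("best espresso machine under $300", SOURCES)
```

The point of the sketch is that the model can only cite what the retrieval step handed it. A page whose product details were never extracted has nothing to contribute to the prompt.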

A BrightEdge study from September 2025 found that 83.3% of pages cited in AI Overviews are not in the traditional top-10 search results.

That means AI Overviews are pulling from a wider pool than regular search rankings. Pages that would never appear on page one of Google can still get cited in an AI Overview - if they have the right signals.

What are those signals?

Google’s official documentation from December 2025 says you don’t need special schema or new markup to appear in AI features. But Google’s own engineers tell a different story in practice.

Ryan Levering, Google’s Software Engineer for Structured Data, stated at Google Search Central Live New York in March 2025: “A lot of our systems run much better with structured data… it’s computationally cheaper than extracting it.”

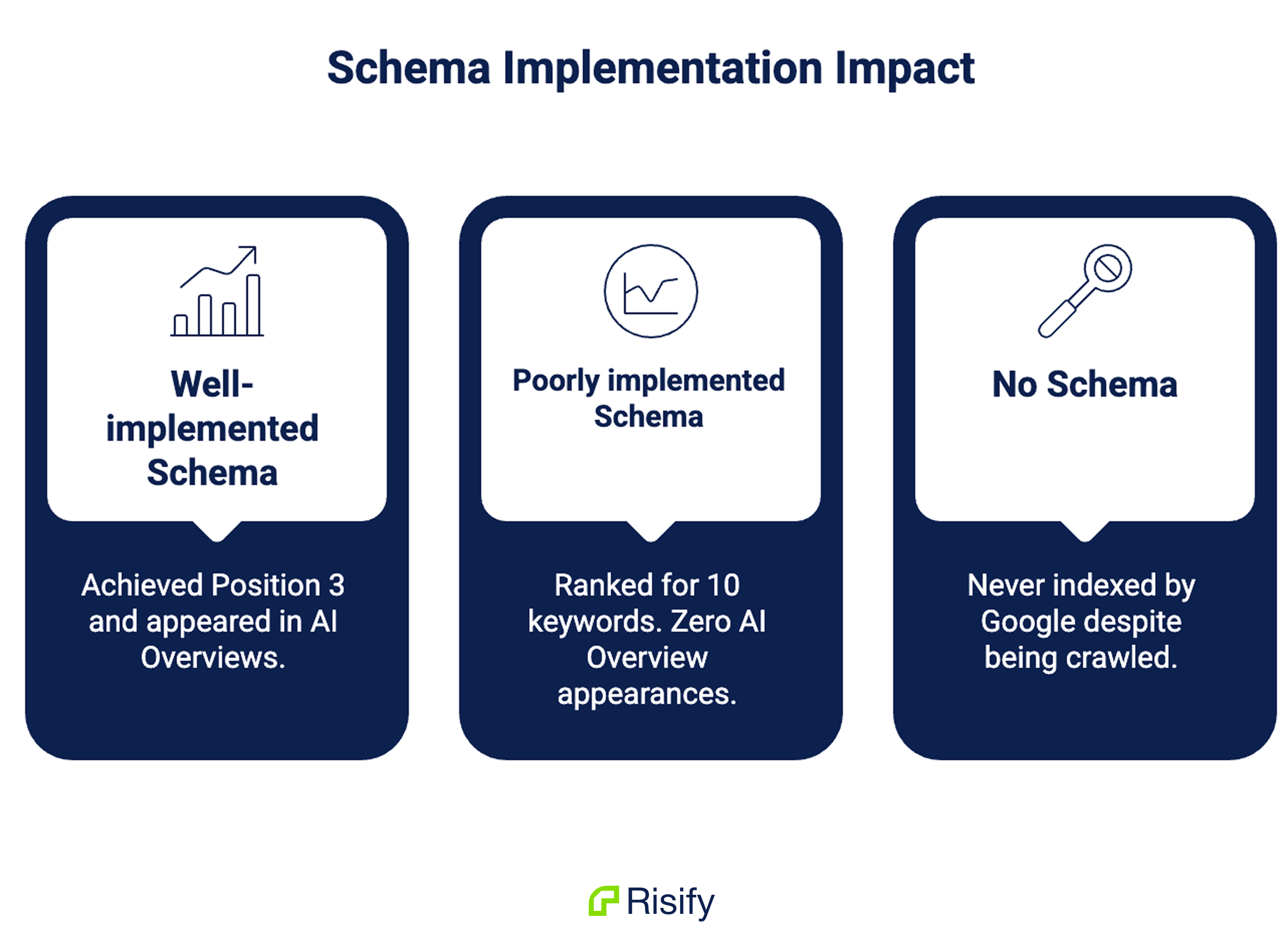

A controlled experiment by Search Engine Land in September 2025 tested three identical single-page sites - one with well-implemented schema, one with poorly implemented schema, and one with no schema. The results:

- Well-implemented schema: The only page to appear in AI Overviews. Achieved Position 3.

- Poorly implemented schema: Ranked for 10 keywords but peaked at Position 8. Zero AI Overview appearances.

- No schema: Never indexed by Google despite being crawled.
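The experiment as described here doesn’t specify what its “well-implemented” schema contained. As a rough picture of what product markup looks like, the sketch below builds a minimal schema.org Product block with an Offer and wraps it in a script tag for server-side output. The function name, product details, and URL are hypothetical.

```python
import json


def product_jsonld(name, description, price, currency, in_stock, url):
    """Return a <script> tag holding minimal schema.org Product markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": (
                "https://schema.org/InStock" if in_stock
                else "https://schema.org/OutOfStock"
            ),
        },
    }
    # Escape "</" so text inside the JSON cannot close the script tag early.
    body = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"


tag = product_jsonld(
    "Trail Runner",
    "Lightweight trail running shoe.",
    129.0,
    "USD",
    True,
    "https://example.com/products/trail-runner",
)
```

For a crawler that doesn’t execute JavaScript to see it, a tag like this has to be written into the page by the server or theme template rather than added by a script in the browser.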

Gemini, Bing Copilot, and Voice Assistants

ChatGPT, Perplexity, and Google AI Overviews get the most attention, but other AI systems are also answering product questions.

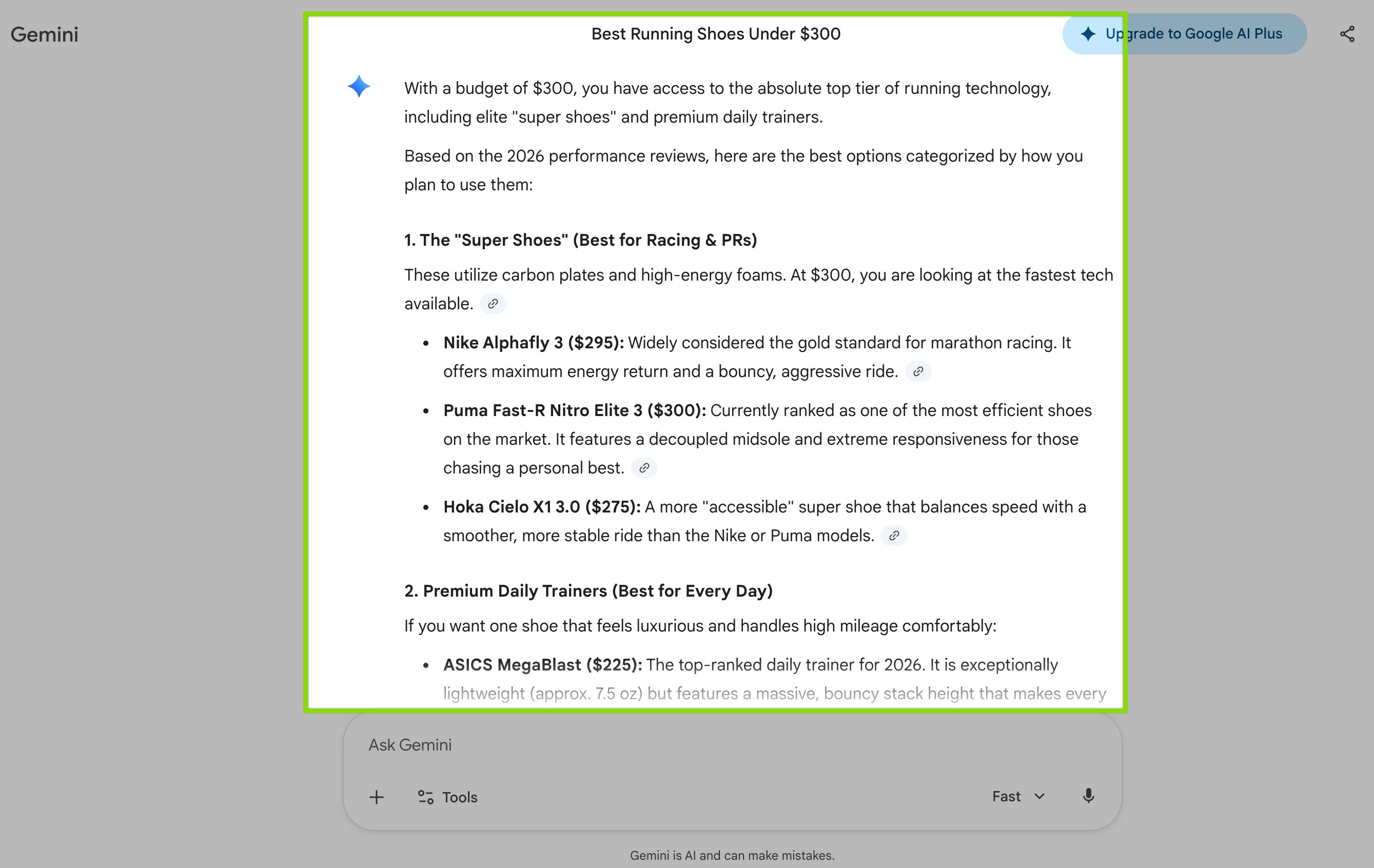

Gemini

Google’s Gemini draws from the same Knowledge Graph and search infrastructure that powers AI Overviews. If your store’s structured data is feeding Google’s systems correctly, it’s available to Gemini as well. The two systems share a foundation, so work done for one largely carries over to the other.

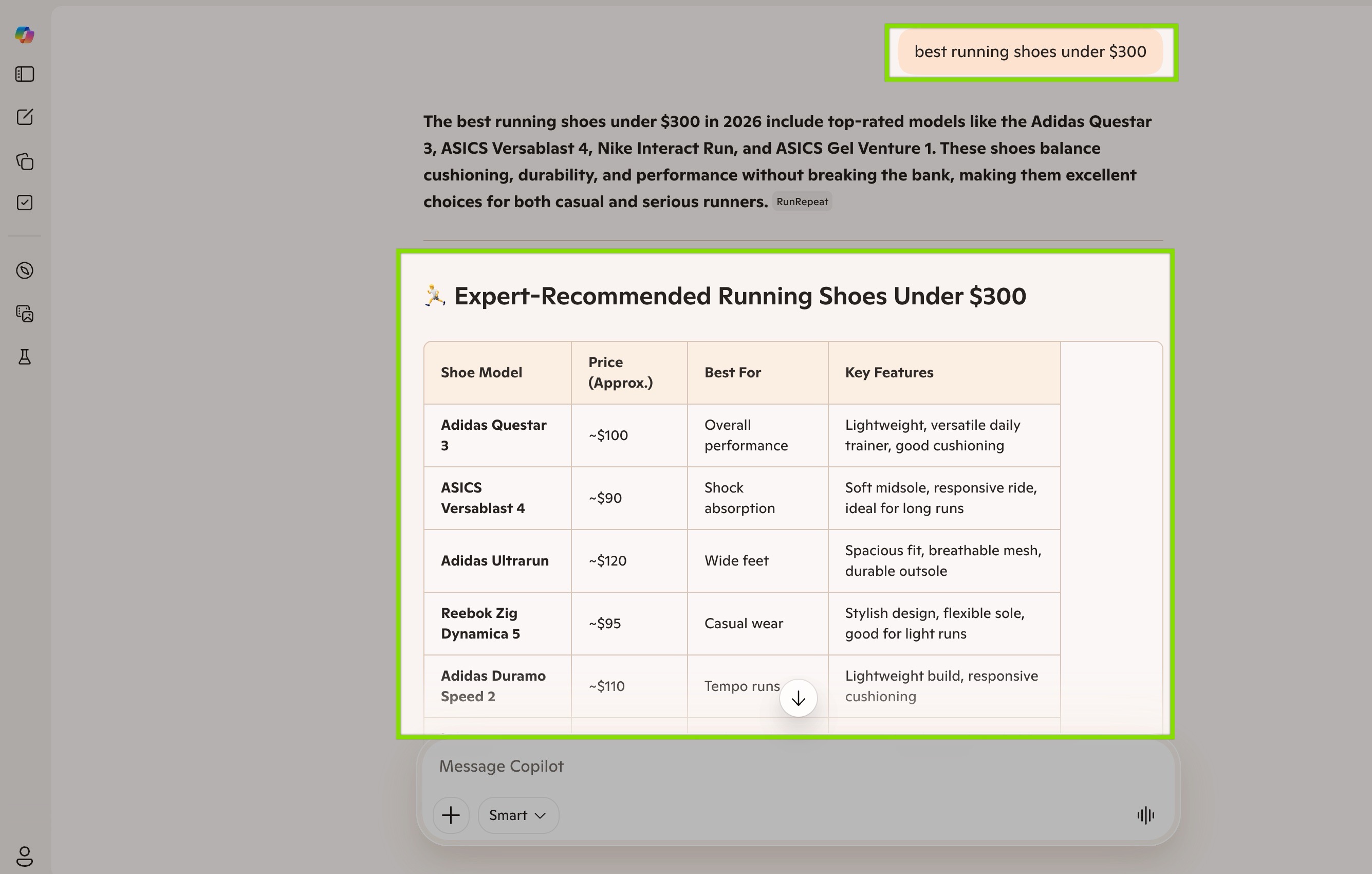

Bing Copilot

Microsoft’s Copilot (formerly Bing Chat) combines the Bing search index with OpenAI’s GPT models through a system called Prometheus. Microsoft describes the key component as “grounding” - using Bing search results to provide the language model with relevant, fresh information.

This is where the strongest official statement about structured data and AI comes from.

Fabrice Canel, Principal Program Manager at Microsoft Bing, stated at SMX Munich in March 2025: “Schema markup helps Microsoft’s LLMs understand content.”

That’s the clearest public statement from any major search engine that structured data helps its AI language models interpret content.

Bing was also a founding member of Schema.org in 2011 alongside Google, Yahoo, and Yandex. Bing’s webmaster documentation explicitly states: “Bing works hard to understand the content of a page and one of the clues that Bing uses is structured data.”

Voice Assistants

Google Assistant, Alexa, and Siri all rely on structured data when answering product queries by voice.

Voice responses have a unique constraint: they typically need to be 29 words or fewer. The assistant can’t read a full product description out loud - it needs a concise, pre-structured answer. This is exactly what FAQ schema and product schema provide: short, direct data points a voice assistant can extract and read back.

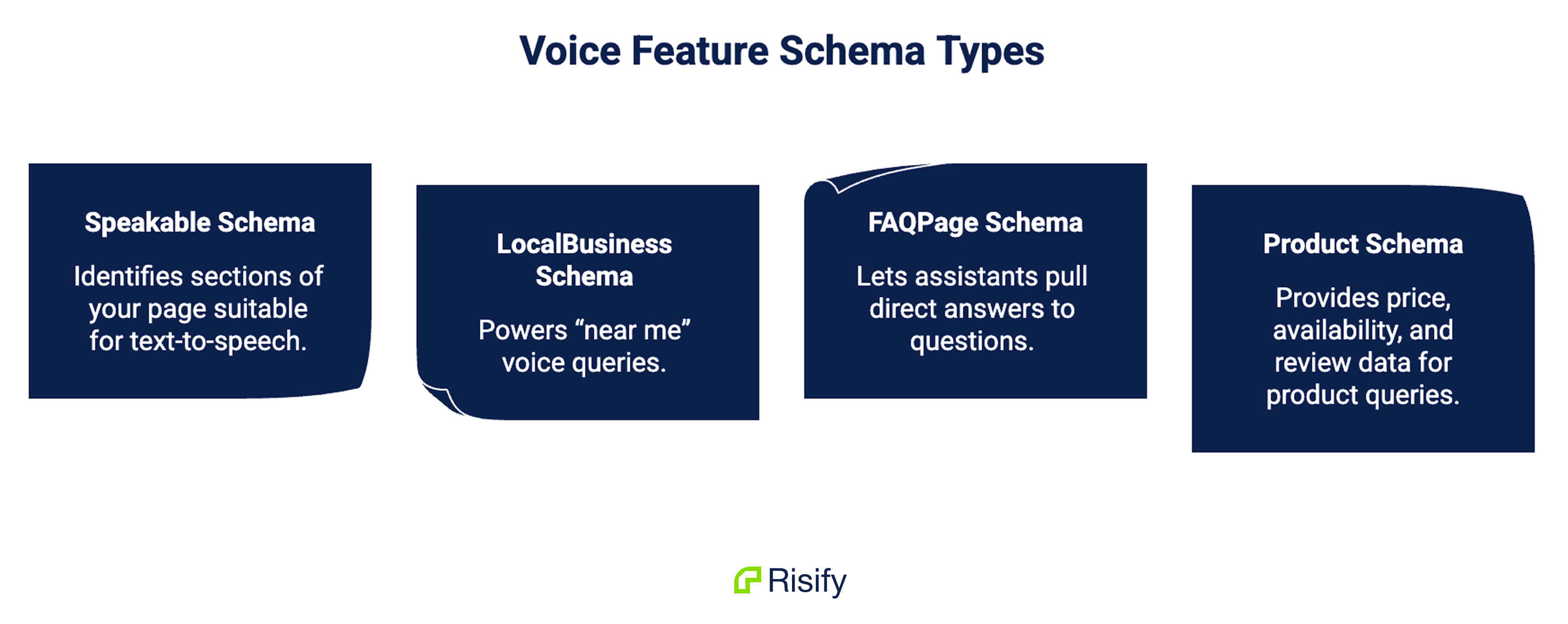

Specific schema types power specific voice features:

- Speakable schema identifies sections of your page suitable for text-to-speech

- LocalBusiness schema powers “near me” voice queries

- FAQPage schema lets assistants pull direct answers to questions

- Product schema provides price, availability, and review data for product queries
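As a sketch of how FAQ content and the voice length constraint fit together, the example below builds schema.org FAQPage data from question-and-answer pairs and flags answers longer than the 29-word figure cited above. The function names and sample questions are hypothetical, and the limit is taken from this guide rather than from any assistant’s documentation.

```python
MAX_SPOKEN_WORDS = 29  # the length figure cited in this guide


def faq_jsonld(pairs):
    """Build schema.org FAQPage data from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def too_long_for_voice(pairs, limit=MAX_SPOKEN_WORDS):
    """Return the questions whose answers exceed the word limit."""
    return [question for question, answer in pairs if len(answer.split()) > limit]


# Hypothetical FAQ content; the second answer is 30 placeholder words.
FAQ_PAIRS = [
    ("Is the Trail Runner waterproof?",
     "Yes. The Trail Runner has a waterproof membrane and sealed seams."),
    ("What is the return policy?", " ".join(["word"] * 30)),
]

faq_data = faq_jsonld(FAQ_PAIRS)
needs_trimming = too_long_for_voice(FAQ_PAIRS)
```

Here `needs_trimming` contains only the return-policy question, since its answer runs one word over the limit.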

What All These Systems Have in Common

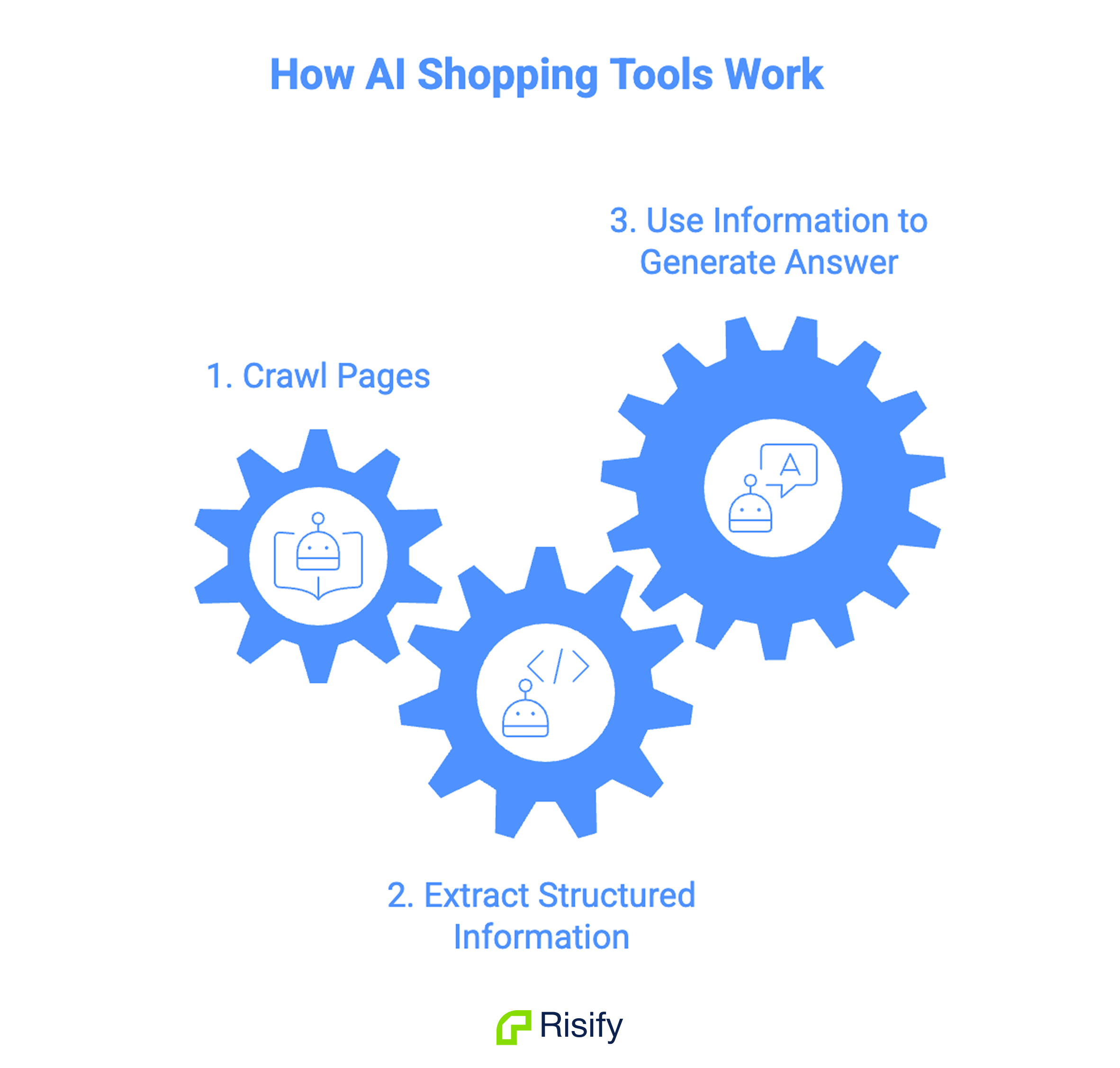

Every AI shopping tool described in this guide - ChatGPT, Perplexity, Google AI Overviews, Gemini, Bing Copilot, voice assistants - follows the same fundamental pattern:

1. Crawl your pages (or access them through a search index)

2. Extract structured information from the HTML

3. Use that information to generate an answer

If step 2 fails - if the crawler finds unstructured content, missing data, or JavaScript-dependent markup it can’t execute - your store drops out of the answer.

The systems differ in how they crawl, how they rank sources, and how they present answers. But they all depend on the same input: structured, server-side rendered data in your page source.

Traditional SEO focused on ranking in a list of ten blue links. AI visibility is about whether your product information gets pulled into a generated answer - across multiple platforms, simultaneously.

A store with proper structured data, FAQ content, and clear site hierarchy is feeding all of these systems at once. A store without it is invisible to all of them.

The next question is obvious.

What exactly do these AI systems read when they land on your page?

That’s what Guide 2 covers: What AI Systems Read From Your Store - the specific data layers AI crawlers extract, how to see what they see, and where most Shopify stores have gaps they don’t know about.

Summary

AI shopping tools are answering product questions with specific recommendations, prices, and links. ChatGPT, Perplexity, Google AI Overviews, Gemini, Bing Copilot, and voice assistants all follow the same core pattern: crawl pages, extract structured data, generate answers.

The critical technical detail is that most AI crawlers don’t execute JavaScript, so only server-side rendered content in your raw HTML is visible to them. Stores with clean structured data feed all these systems simultaneously. Stores without it are excluded from all of them.